I’ve been writing blog posts on and off since 2009, and it’s been a fascinating experience. By now, I’ve learned a lot about researching, writing articles, getting feedback, and finding out what users do and don’t like. Blogging is an acquired skill, one I’ve become better at only in the past year or so because I’ve actually dedicated time to writing on a regular basis.

When I first started blogging, I was pretty bad at it. I didn’t post on any regular schedule, and I didn’t know how to choose topics, so I just randomly wrote about iPads, my family, movies I’d watched, and things like that. Eventually, I learned how to pick good titles, how to condense complicated ideas into concise posts that summarize an issue, and how to steer the conversation in the right direction to help drive conversions and sales. I’ve also watched the websites I blog on see huge jumps in organic search traffic because of it. It’s pretty fun, actually.

But one thing I’ve never quite been able to get a handle on is how helpful a blog post is for someone who actually does find and read it. I have always allowed comments, so that’s been an incredibly valuable tool because people can write questions right there at the bottom of the post. However, what about those people who don’t comment? How do I get a measurement from all those people who read my posts, then leave without commenting, never to be heard from again?

I had wondered for long enough that I finally decided to do something about it: I created a small survey where people could tell me whether the blog post they were reading was helpful or not. There are several tools that do this, but I decided to try Qualaroo (formerly known as KissInsights) because I could get a free trial for a month. Simple enough! I signed up for the free trial, created a question, and was up and running in about twenty minutes.

(This is what the survey window looked like. Simple, right?)

All you have to do to set it up is put a snippet of JavaScript in your website’s footer, then choose what kind of questions you want to ask. In my case, I just created the super-simple one above and deployed it on the 5-10 posts with the highest volume of traffic. After running the test for about a month, here are the results (see below).

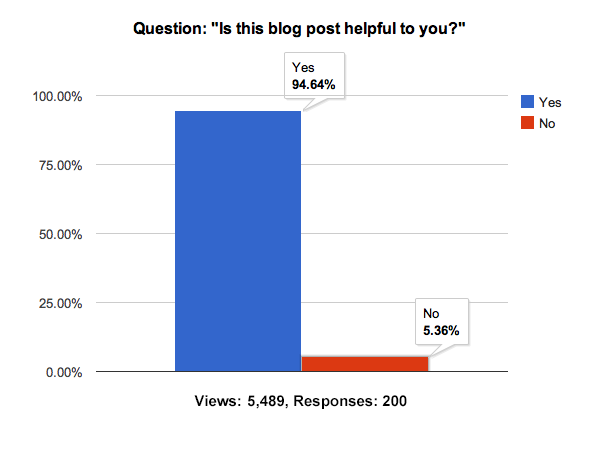

As you can see, from the time I turned the test on until I turned it off, the survey was shown 5,489 times, and I received exactly 200 responses. That means my overall response rate was 3.64%. I have nothing to compare this to, so I’m not sure whether this is a “good” or “bad” response rate. But I do know this: the actual responses were fascinating to see. A whopping 94.64% of respondents said “Yes,” the blog post(s) they were reading was helpful. Hooray! Only 5.36% of respondents voted “No.”
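If you want to check the response-rate arithmetic yourself, here is a minimal sketch in Python. The function name `response_rate` is just something I made up for illustration; the only real inputs are the two numbers quoted above (5,489 times shown, 200 responses).

```python
def response_rate(responses: int, impressions: int) -> float:
    """Return responses as a percentage of the times the survey was shown."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return 100.0 * responses / impressions


# Figures from the survey results described above.
rate = response_rate(responses=200, impressions=5489)
print(f"Response rate: {rate:.2f}%")  # prints "Response rate: 3.64%"
```

You can plug in the numbers from your own survey to get a figure you can compare against mine.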

I actually do have something to compare those percentages to: real life! Getting a 94% on anything is a good thing, and while this wasn’t a scientific study, to me it at least suggests that a super-majority of our website visitors like what they’re seeing. Do the responses of 200 people to a simple yes/no question tell me what I need to do with my website? No. But this is a little taste of good, quality feedback that can turn into something actionable as part of a bigger plan.

I ran this simple test because I’m always looking for ways to make our website better for our visitors, answer their questions more effectively, and help them find what they want. I highly encourage you to try this on your own website as well. My Internet Marketing motto for the past few years has been “Data Wins Arguments,” and I frequently tell people “start testing, and stop arguing.” So if you’ve never run a test like this, you should—it’s a simple way to dip your toes into the water.

If you want to try it, just visit Qualaroo (or any similar service), create a question, let it run for a month or so, and see what happens. Then leave a comment here and let me know how you did! I would love to hear what kind of interesting feedback you get, and I’d be even more interested in figuring out what that data means and how best to make changes in response to it. You can always contact us if the numbers suggest you may have problems, but you’ll never know until you start testing. So give it a shot! Good luck!